The modern data centre is an environmental disaster disguised as a digital miracle. As chipmakers run up against the physical limits of Moore’s Law, the tech industry has been frantically stacking chips and burning gigawatts to keep up with the insatiable hunger of neural networks. But a small group of bioengineers and venture capitalists are now betting on a radical alternative that, on energy efficiency at least, could make even an NVIDIA H100 look like a pocket calculator. They are building computers out of living human brain cells. This isn’t a science fiction trope about cyborgs. It is a cold, calculated move to replace energy-hungry silicon with "wetware": synthetic biological intelligence grown in a lab.

The Efficiency Crisis in the Server Rack

To understand why anyone would want to plug a cluster of neurons into a motherboard, you have to look at the power bill. Human brains are among the most energy-efficient information processors known. A human brain runs on about 20 watts, roughly what a dim lightbulb draws. In contrast, a modern AI cluster training a large language model can draw tens of megawatts, comparable to the electricity demand of a small city.
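To put rough numbers on that contrast, here is a back-of-the-envelope sketch. The cluster size (10,000 accelerators), the 700 W per-accelerator figure (the top rated power of an H100 SXM module) and the 1.3 facility overhead factor are all assumptions chosen for illustration, not data from any real deployment. The sketch also ignores CPUs, networking and storage.

```python
# Back-of-the-envelope power comparison.
# All cluster figures below are illustrative assumptions.
BRAIN_WATTS = 20.0           # commonly cited estimate for a human brain
ACCELERATOR_WATTS = 700.0    # assumed: rated maximum power of one H100 SXM module
NUM_ACCELERATORS = 10_000    # hypothetical training cluster size
PUE = 1.3                    # assumed power usage effectiveness (cooling overhead)


def cluster_power_watts(count: int, watts_each: float, pue: float) -> float:
    """Facility-level draw: accelerator power scaled up by the PUE overhead.
    CPUs, networking and storage are deliberately left out."""
    return count * watts_each * pue


cluster_w = cluster_power_watts(NUM_ACCELERATORS, ACCELERATOR_WATTS, PUE)
brain_equivalents = cluster_w / BRAIN_WATTS

print(f"Cluster draw: {cluster_w / 1e6:.1f} MW")
print(f"Same power would run about {brain_equivalents:,.0f} human brains")
```

Under these assumptions the cluster draws about 9.1 MW, the power budget of roughly 455,000 brains.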

The problem is the architecture. Conventional computers follow the von Neumann model, in which data constantly travels back and forth between the processor and a separate memory. This movement creates heat and consumes massive amounts of energy. Biological neurons don't work that way. In a brain, memory and processing happen in the same place: the connections between neurons both store what has been learned and take part in the computation. By using "organoids" (three-dimensional structures of living tissue derived from human stem cells), startups are attempting to replicate this efficiency.
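The cost of shuttling data can be illustrated with a toy count of memory transfers for a matrix-vector product, the core operation in neural networks. This is a simplified analogy that assumes one transfer per value moved and no caches. The "in-memory" variant stands in for architectures where stored values stay put during computation; it is not a model of real neurons.

```python
# Toy comparison of data movement in a matrix-vector product.
# A "transfer" is one value crossing the processor-memory boundary;
# caches, buses and real energy costs are ignored.


def matvec_von_neumann(weights: list[list[float]], x: list[float]):
    """Every weight and input is fetched to the processor for each use."""
    result, transfers = [], 0
    for row in weights:
        acc = 0.0
        for w, xi in zip(row, x):
            transfers += 2          # fetch one weight and one input value
            acc += w * xi           # arithmetic happens in the processor
        transfers += 1              # write this row's result back to memory
        result.append(acc)
    return result, transfers


def matvec_in_memory(weights: list[list[float]], x: list[float]):
    """Weights never leave storage: inputs go in once, results come out once."""
    result = [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    transfers = len(x) + len(weights)
    return result, transfers


W = [[1, 2, 3, 4], [0, 1, 0, 1], [2, 2, 2, 2]]
x = [1, 1, 1, 1]
print(matvec_von_neumann(W, x))  # ([10.0, 2.0, 8.0], 27)
print(matvec_in_memory(W, x))    # ([10, 2, 8], 7)
```

Both functions compute the same answer. The gap in transfers grows with matrix size because the von Neumann version moves every weight on every use.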

Growing the Motherboard

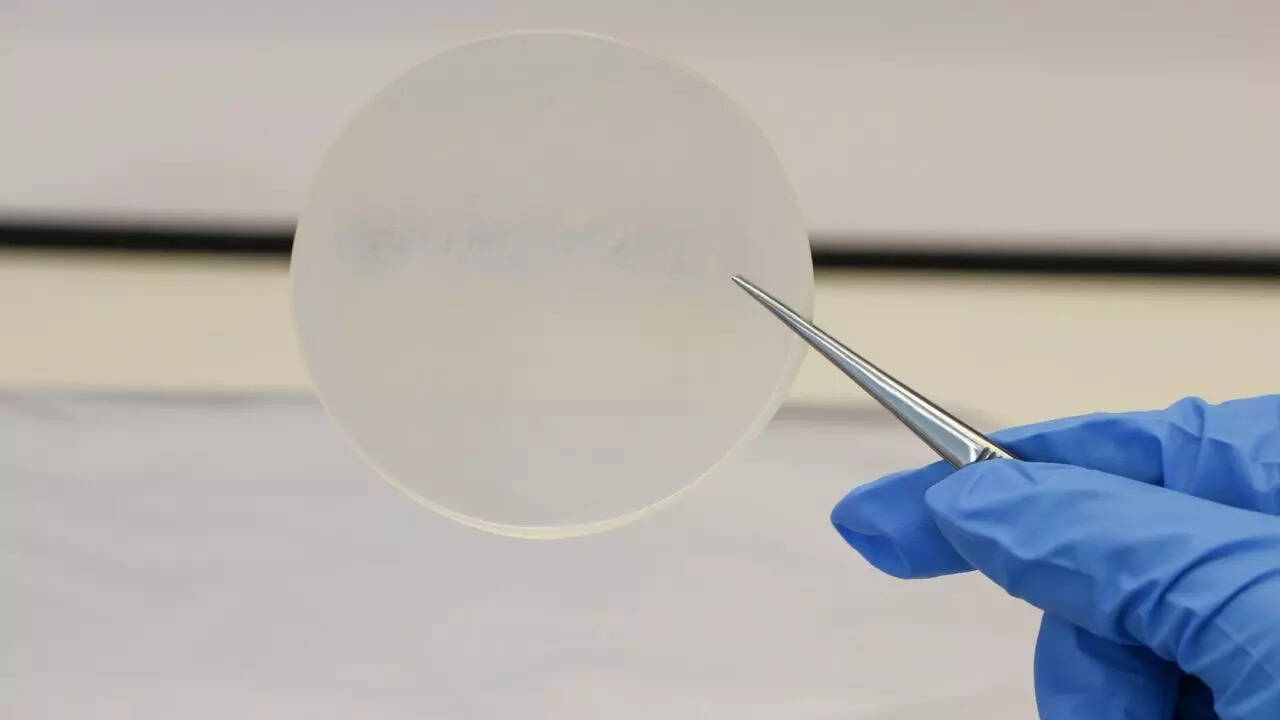

The process starts with skin or blood cells, which are reprogrammed into induced pluripotent stem cells. These cells are then nudged to grow into neurons. These aren't "thinking" brains in a jar; they are simplified clusters of cells that can be trained to process electrical signals.

The interface is where the engineering gets difficult. The neurons are grown on top of multi-electrode arrays (MEAs), chips studded with tiny electrodes that act as the bridge between the biological and the digital. The arrays send electrical pulses into the organoid to "input" data and read the resulting electrical activity as "output."
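The process of turning a digital value into a pattern of pulses, and recorded activity back into a decision, can be sketched as a pair of encode/decode functions. The sketch is loosely modeled on published Pong experiments. It assumes a place code (which input electrode fires) for the ball's height and a rate code (how often it fires) for its distance. The electrode count, the 4 to 40 Hz range and the two "motor" regions are illustrative assumptions, not any company's published interface.

```python
from dataclasses import dataclass

NUM_INPUT_ELECTRODES = 8       # assumed size of the stimulation ("input") region
MIN_HZ, MAX_HZ = 4.0, 40.0     # assumed stimulation frequency range


@dataclass
class Stimulus:
    electrode: int       # which input electrode to pulse (place code)
    frequency_hz: float  # how often to pulse it (rate code)


def encode_ball(height: float, distance: float) -> Stimulus:
    """Map a ball position, both coordinates normalised to [0, 1], to a stimulus.
    Height picks the electrode; a closer ball means faster pulsing."""
    height = min(max(height, 0.0), 1.0)
    distance = min(max(distance, 0.0), 1.0)
    electrode = min(int(height * NUM_INPUT_ELECTRODES), NUM_INPUT_ELECTRODES - 1)
    frequency = MAX_HZ - distance * (MAX_HZ - MIN_HZ)
    return Stimulus(electrode, frequency)


def decode_paddle(spikes_up_region: int, spikes_down_region: int) -> str:
    """Turn recorded activity into an action by comparing two output regions."""
    if spikes_up_region > spikes_down_region:
        return "up"
    if spikes_down_region > spikes_up_region:
        return "down"
    return "stay"
```

For example, `encode_ball(0.5, 0.5)` pulses electrode 4 at 22 Hz. A real system would also have to cope with noisy, drifting spike counts.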

Biocomputing companies are already showing results. In one widely reported experiment, a dish of living neurons learned to play the arcade game Pong, and a follow-up comparison suggested the cells improved with less training than several standard deep reinforcement learning algorithms. They didn't do it because they "wanted" to play. They did it because the system was designed to respond with predictable electrical stimulation when the paddle hit the ball and random, unpredictable stimulation when it missed. The cells reorganized their activity to minimize the unpredictable input, essentially learning through a biological drive for stability.
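The feedback rule can be sketched as a function that returns a pulse schedule. After a hit it produces an identical, evenly spaced burst; after a miss it produces a randomly scattered one. The electrode numbers, the 100 Hz burst and the one-second window are assumptions for illustration. The point is only that one signal is predictable and the other is not.

```python
import random


def feedback_pattern(hit: bool, electrodes: list[int], rng: random.Random,
                     pulses_per_electrode: int = 10,
                     window_ms: float = 1000.0) -> list[tuple[int, float]]:
    """Return (electrode, time_ms) pulses to deliver after one rally.

    hit=True  -> the same evenly spaced burst every time (predictable).
    hit=False -> random electrodes at random times (unpredictable).
    """
    if hit:
        period_ms = 10.0  # assumed 100 Hz burst
        return [(e, i * period_ms)
                for i in range(pulses_per_electrode) for e in electrodes]
    total = pulses_per_electrode * len(electrodes)
    pulses = [(rng.choice(electrodes), rng.uniform(0.0, window_ms))
              for _ in range(total)]
    return sorted(pulses, key=lambda p: p[1])  # deliver in time order
```

Two hits always yield the same schedule, while two misses almost never do. That difference in predictability is the only "reward" signal the cells receive.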

The Survival Costs of Living Hardware

Maintaining a traditional server room involves high-end cooling systems and fire suppression. Maintaining a wetware data centre involves an incubator. These cells need to be fed a nutrient-rich "soup" and kept at exactly 37 degrees Celsius. If the power goes out, the processor doesn't just shut down. It dies.
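In practice, keeping the processor alive becomes a monitoring problem. Here is a minimal watchdog sketch. It assumes a tolerance of half a degree around 37 °C and a hypothetical 48-hour limit between nutrient changes; real culture protocols vary.

```python
from dataclasses import dataclass

TARGET_C = 37.0                  # body temperature, as stated in the text
TOLERANCE_C = 0.5                # assumed acceptable drift
MAX_HOURS_BETWEEN_FEEDS = 48.0   # hypothetical nutrient-change interval


@dataclass
class IncubatorReading:
    temperature_c: float
    on_mains_power: bool
    hours_since_feed: float


def check_incubator(reading: IncubatorReading) -> list[str]:
    """Return human-readable alerts; an empty list means all checks passed."""
    alerts = []
    if abs(reading.temperature_c - TARGET_C) > TOLERANCE_C:
        alerts.append(f"temperature out of range: {reading.temperature_c:.1f} C")
    if not reading.on_mains_power:
        alerts.append("on backup power: cells at risk if the outage continues")
    if reading.hours_since_feed > MAX_HOURS_BETWEEN_FEEDS:
        alerts.append("nutrient medium overdue for replacement")
    return alerts
```

Unlike a server alarm, each alert here has a biological clock attached. The same reading that is harmless for minutes can be fatal after hours.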

This introduces a level of fragility that the enterprise tech world isn't used to. You can’t just swap out a dead neuron cluster like a faulty hard drive. Each organoid is unique. While silicon chips are identical by design, biological systems possess inherent variability. This "noise" is often seen as a drawback, but some researchers argue it is actually the secret to true creativity and problem-solving.
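One common way to cope with unit-to-unit variability is to calibrate each device against its own baseline. The sketch below assumes activity is summarised as firing rates (spikes per second). It converts each organoid's response into a z-score relative to its own resting activity; the numbers are invented for illustration.

```python
from statistics import mean, stdev


def calibrate(baseline_rates: list[float]) -> tuple[float, float]:
    """Summarise one organoid's resting firing rates (spikes/s) as mean and spread."""
    return mean(baseline_rates), stdev(baseline_rates)


def normalise(rate: float, calibration: tuple[float, float]) -> float:
    """Express a response as standard deviations above that organoid's baseline."""
    mu, sigma = calibration
    return (rate - mu) / sigma if sigma > 0 else 0.0


# Two hypothetical organoids with very different resting activity.
quiet = calibrate([10, 12, 14])   # mean 12, stdev 2
busy = calibrate([40, 50, 60])    # mean 50, stdev 10
print(normalise(16, quiet), normalise(70, busy))  # 2.0 2.0
```

Normalising like this makes outputs comparable in scale. It does not make two organoids interchangeable, since they may still have learned different things.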

The Ethical Minefield of Synthetic Intelligence

We are quickly approaching a point where the technical hurdles are being eclipsed by moral ones. If a cluster of human neurons can learn, solve problems, and respond to its environment, at what point does it deserve a different status than a piece of hardware?

Current organoids have no sensory organs, no blood supply, and nothing resembling consciousness. They are small, roughly the size of a mustard seed. However, the roadmap for this technology involves scaling up. As these biological computers become more complex, the line between "biological material" and "sentient entity" begins to blur.

There is also the question of "neural donor" rights. If your cells are used to create a high-performing biological computer that generates billions in profit for a corporation, do you own the intellectual property of your "offspring" neurons? The legal frameworks for this simply do not exist. We are applying 20th-century property laws to a 21st-century biological frontier.

Chasing the 100,000x Advantage

The business case for wetware is driven by a single, staggering number. Some estimates suggest that biological computing could be 100,000 times more energy-efficient than silicon for specific AI tasks. For a company like Google or Microsoft, reducing a billion-dollar energy bill to roughly ten thousand dollars is a competitive advantage that outweighs almost any ethical or technical risk.
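The arithmetic behind that claim is simple division, but it only applies to the share of work that actually moves to biological hardware. The sketch assumes constant electricity prices and an identical workload. The 100,000x factor is the estimate quoted above; the 10 per cent offload share is hypothetical.

```python
def bill_after_offload(current_bill: float, efficiency_factor: float,
                       offloaded_share: float = 1.0) -> float:
    """Energy bill after moving `offloaded_share` of the workload to hardware
    that is `efficiency_factor` times more energy-efficient.
    Assumes constant prices and an unchanged total workload."""
    stays_on_silicon = current_bill * (1.0 - offloaded_share)
    moved = current_bill * offloaded_share / efficiency_factor
    return stays_on_silicon + moved


print(bill_after_offload(1_000_000_000, 100_000))       # everything moves: 10000.0
print(bill_after_offload(1_000_000_000, 100_000, 0.1))  # only 10% moves
```

If only a tenth of the workload moves, the bill is still about $900 million. The headline saving depends on how much work biology can actually take over.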

We are seeing a shift from "artificial" intelligence to "synthetic" intelligence. The goal is no longer to make a machine that acts like a human, but to use the building blocks of humanity to build a better machine.

The Hybrid Future of the Data Centre

The most likely outcome isn't the total replacement of the silicon chip. Instead, we are looking at a hybrid model. Silicon is excellent at high-speed math and rigid logic. Biology is excellent at pattern recognition and learning with minimal data.

A future server rack might contain a standard CPU for managing file systems and general logic, a GPU for heavy parallel math, and a BPU (Biological Processing Unit) for handling complex, intuitive AI tasks. This allows the system to offload the most energy-intensive tasks to the organoids, while the silicon handles the grunt work.
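This division of labour amounts to a scheduling policy. Here is a minimal sketch of such a router. It assumes hypothetical task categories and a BPU that may be offline, since living hardware can fail in ways silicon does not.

```python
from enum import Enum


class Unit(Enum):
    CPU = "cpu"
    GPU = "gpu"
    BPU = "bpu"  # hypothetical biological processing unit


# Hypothetical task categories mapped to the unit suggested for each.
ROUTING = {
    "file_io": Unit.CPU,
    "control_logic": Unit.CPU,
    "matrix_math": Unit.GPU,
    "pattern_recognition": Unit.BPU,
    "few_shot_learning": Unit.BPU,
}


def route(task_kind: str, bpu_available: bool = True) -> Unit:
    """Pick a processor for a task; unknown tasks default to the CPU."""
    unit = ROUTING.get(task_kind, Unit.CPU)
    if unit is Unit.BPU and not bpu_available:
        return Unit.GPU  # fall back to silicon if the organoids are offline
    return unit
```

The fallback is the key design choice. A hybrid rack has to keep serving requests when a biological unit is being fed, retrained or replaced.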

The Practical Obstacles to Scale

While the lab results are impressive, scaling this to a commercial level is a nightmare of logistics. You need a sterile supply chain for nutrients. You need specialized waste management for the biological byproducts. You need a way to standardize the "training" of cells that are, by their very nature, non-standard.

Investors are pouring money into this because the alternative is a dead end. We cannot keep doubling the power consumption of our digital infrastructure every few years. The heat generated by our current path is unsustainable. If we want smarter machines, we may have to accept that they need to be alive, at least in a cellular sense.

Rewriting the Stack

Software developers will have to learn an entirely new way of coding. You don't "program" an organoid; you "train" it through reinforcement. The traditional stack of languages like Python or C++ will need a translation layer to communicate with biological tissues. We are talking about "wet" coding—where the feedback loops are chemical and electrical rather than purely binary.
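Here is one way such a translation layer might look: an interface with three calls (stimulate, record, feedback) plus a training loop written against it. Everything here is hypothetical. `WetwareBackend` is not a real vendor API, and `SimulatedCulture` is a toy stand-in so the loop runs without hardware. The toy simply reinforces whichever response earned predictable feedback and is not a model of how real neurons learn.

```python
import random
from typing import Protocol


class WetwareBackend(Protocol):
    """Hypothetical calls a translation layer might expose (not a real API)."""
    def stimulate(self, electrode: int) -> None: ...
    def record(self) -> int: ...  # index of the most active output region
    def feedback(self, predictable: bool) -> None: ...


class SimulatedCulture:
    """Toy stand-in for a living culture so the loop runs without hardware.
    It strengthens responses followed by predictable feedback and weakens
    the others; it is not a model of real neurons."""

    def __init__(self, n_inputs: int, n_outputs: int, seed: int = 0):
        self.rng = random.Random(seed)
        self.weights = [[1.0] * n_outputs for _ in range(n_inputs)]
        self.current_input = 0
        self.last_response = 0

    def stimulate(self, electrode: int) -> None:
        self.current_input = electrode

    def record(self) -> int:
        row = self.weights[self.current_input]
        self.last_response = self.rng.choices(range(len(row)), weights=row)[0]
        return self.last_response

    def feedback(self, predictable: bool) -> None:
        row = self.weights[self.current_input]
        row[self.last_response] *= 1.5 if predictable else 0.8


def train(backend: WetwareBackend, targets: dict[int, int], rounds: int) -> None:
    """Training, not programming: the loop can only choose what feedback to give."""
    for _ in range(rounds):
        for stim, wanted in targets.items():
            backend.stimulate(stim)
            response = backend.record()
            backend.feedback(predictable=(response == wanted))


culture = SimulatedCulture(n_inputs=2, n_outputs=2, seed=42)
train(culture, targets={0: 1, 1: 0}, rounds=50)
print(culture.weights)
```

The training loop never sets a weight directly. It can only decide what feedback to deliver, which is the practical difference between programming and training.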

This shift will redefine the role of the data centre engineer. The job will require as much knowledge of microbiology as it does of network architecture. The "clean room" will no longer just be about keeping dust off silicon wafers; it will be about keeping bacteria from infecting the processors.

The move toward wetware is an admission of a hard truth. We have spent seventy years trying to build a machine as capable as a brain, only to realize that the most efficient way to get that performance is to use the brain’s own architecture. The silicon era isn't over, but it has finally met its biological match.

The next time you query an AI, the answer might not come from a humming rack of hot metal, but from a quiet, warm vat of living cells that are "thinking" for a fraction of a cent.

Audit your current ESG goals and energy projections for the next five years. If your roadmap assumes silicon efficiency will continue to scale at historical rates, you are likely underestimating your future costs. Investigate the startups currently offering "BPU-as-a-service" pilots to understand the latency and training differences of biological integration before the hardware shift leaves your infrastructure obsolete.
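A simple way to stress-test that assumption is to project energy use as compute demand divided by efficiency. The growth rates in the example are hypothetical placeholders; substitute your own forecasts.

```python
def projected_energy(base_energy: float, demand_growth: float,
                     efficiency_gain: float, years: int) -> list[float]:
    """Yearly energy use when compute demand grows by `demand_growth` per year
    and compute delivered per unit of energy improves by `efficiency_gain`
    per year (both as fractions, e.g. 0.3 for 30%)."""
    ratio = (1 + demand_growth) / (1 + efficiency_gain)
    return [base_energy * ratio ** year for year in range(years + 1)]


# Hypothetical scenario: demand grows 40% a year.
on_trend = projected_energy(100.0, 0.40, 0.40, 5)  # efficiency keeps pace
slowdown = projected_energy(100.0, 0.40, 0.10, 5)  # efficiency gains slow
print([round(e) for e in on_trend])
print([round(e) for e in slowdown])
```

In this scenario, energy use stays flat while efficiency keeps pace with demand. If efficiency gains slow to 10% a year, energy use reaches roughly 3.3 times the starting level after five years.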