The valuation of Nscale at $14.6 billion, anchored by Nvidia’s strategic investment, signals a transition from the "experimental" phase of AI deployment to the "industrial" phase of sovereign compute. This valuation is not a reflection of simple cloud services revenue; it is a calculation of the scarcity of high-density power, the vertical integration of the GPU supply chain, and the compression of the "Time-to-Training" metric for enterprise LLMs. To understand Nscale’s position, one must analyze the structural deficits in legacy hyperscale architecture—specifically AWS, Azure, and GCP—which were built for the general-purpose CPU era and are currently struggling with the thermal and electrical density requirements of Blackwell-class clusters.

The Three Pillars of Discrete Compute Infrastructure

The emergence of "GPU-first" clouds like Nscale is driven by three distinct structural advantages that legacy providers cannot easily replicate without massive capital expenditure and localized retrofitting.

1. Thermal and Power Density Engineering

Standard data center racks typically operate between 5 kW and 15 kW. Modern AI workloads involving H100 or B200 clusters demand 60 kW to 100 kW per rack. Nscale’s valuation rests on its ability to provide liquid-cooling-ready environments that prevent thermal throttling. When a GPU throttles, the cost per unit of useful compute rises: the job takes longer to finish while the hourly bill and much of the power draw stay the same. Nscale’s infrastructure is optimized for the Compute-to-Cooling Ratio, which in practice means a low Power Usage Effectiveness (PUE, total facility power divided by IT power), so that a larger share of facility power reaches the silicon rather than the fans and chillers.
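The two effects in this paragraph can be put into numbers with a minimal sketch. It assumes PUE is defined as total facility power divided by IT power, and that a throttled GPU is billed at the same hourly rate while delivering only a fraction of its peak throughput. The function names, the hourly rate, and the PUE values are illustrative assumptions, not Nscale figures.

```python
def it_power_share(pue: float) -> float:
    """Fraction of total facility power that reaches IT equipment (1 / PUE)."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 / pue


def effective_cost_per_unit_work(hourly_rate: float, throttle_factor: float) -> float:
    """Cost per unit of useful work when a GPU runs at a fraction of peak speed.

    throttle_factor = 1.0 means no throttling; 0.8 means 80% of peak throughput.
    The hourly rate is assumed to be charged regardless of throttling.
    """
    if not 0.0 < throttle_factor <= 1.0:
        raise ValueError("throttle_factor must be in (0, 1]")
    return hourly_rate / throttle_factor


if __name__ == "__main__":
    # Hypothetical PUE values for an air-cooled and a liquid-cooled hall.
    for label, pue in [("air-cooled (assumed)", 1.5), ("liquid-cooled (assumed)", 1.2)]:
        print(f"{label}: {it_power_share(pue):.1%} of facility power reaches IT load")

    # Hypothetical $2.00/hour GPU that throttles to 80% of peak throughput.
    print(f"effective rate: ${effective_cost_per_unit_work(2.00, 0.8):.2f} per peak-hour of work")
```

Under these assumptions, a 20% throughput loss raises the effective price of the same work by 25%, which is why cooling headroom shows up directly in the cost of a training run.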

2. Supply Chain Seniority

Nvidia’s investment in Nscale creates a self-reinforcing loop. By backing the infrastructure provider, Nvidia secures a guaranteed, high-performance destination for its hardware. For Nscale, this relationship mitigates the single greatest risk in the sector: lead-time volatility. While Tier 2 providers wait 26 to 52 weeks for H100 allocations, Nscale’s status as a "Preferred Partner" ensures capital can be deployed into revenue-generating assets immediately. This reduces the Weighted Average Cost of Capital (WACC) because the risk of idle data center floor space is virtually eliminated.

3. Sovereign and Private Cloud Requirements

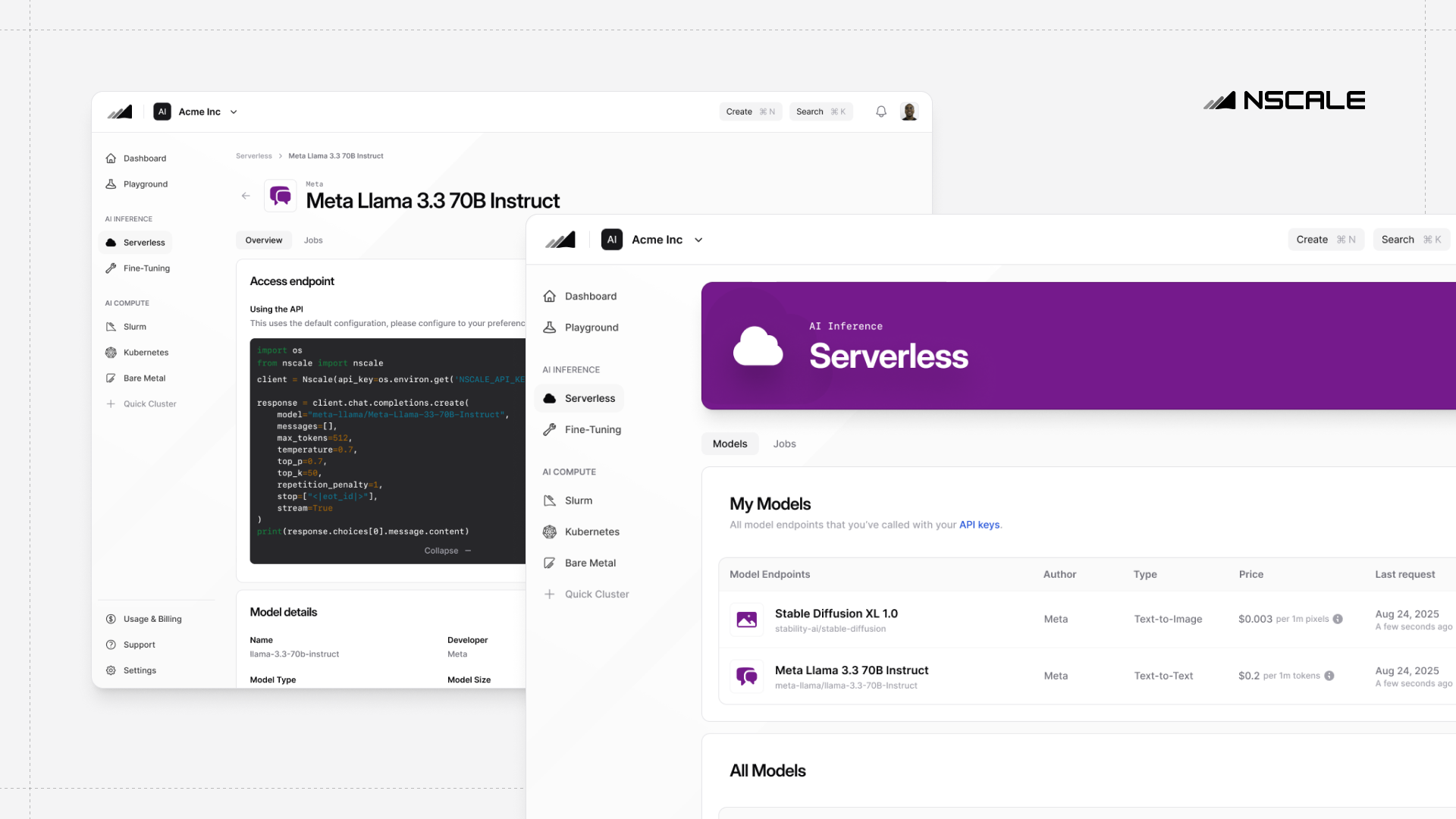

Enterprises are increasingly moving away from public clouds for model training due to "Data Gravity" and security concerns. Nscale operates as a specialized "Virtual Private Cloud" (VPC) for AI, offering bare-metal performance without the hypervisor overhead found in traditional virtualization. This removes the "noisy neighbor" effect, where shared resources on a public cloud degrade the deterministic performance required for large-scale distributed training (e.g., NCCL/RDMA communication between nodes).

The Cost Function of Model Training

To quantify Nscale’s value proposition, one must look at the total cost of ownership (TCO) for a company training a 70B+ parameter model. The cost is not merely the hourly rate of the GPU; it is a function of:

$\text{Total Cost} = (\text{Hardware Hourly Rate} + \text{Interconnect Overhead}) \times \dfrac{\text{Training Time}}{\text{Effective MFU}}$

- MFU (Model FLOPs Utilization): Nscale targets a higher MFU by optimizing the InfiniBand fabrics between nodes. If a legacy cloud has 40% MFU and Nscale achieves 55%, the effective cost of the Nscale cluster is about 27% lower at the same hourly price (1 - 0.40/0.55 ≈ 0.27). It stays cheaper as long as its hourly sticker price is less than 37.5% higher (0.55/0.40 - 1).

- Interconnect Bottlenecks: Training throughput scales sub-linearly with the number of GPUs. The bottleneck is rarely the chip itself; it is the "All-Reduce" operation, in which GPUs exchange and combine gradients. Nscale’s architecture focuses on non-blocking, fat-tree topologies that minimize latency spikes during these synchronization steps.
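The cost function and the MFU comparison above can be checked with a short sketch. It reads "Training Time" as the GPU-hours the run would need at 100% utilization, which is an interpretation rather than something the formula states. The function names and the dollar figures in the usage section are hypothetical.

```python
def total_training_cost(
    hourly_rate: float,
    interconnect_overhead: float,
    ideal_gpu_hours: float,
    mfu: float,
) -> float:
    """Total Cost = (hourly rate + interconnect overhead) * (training time / MFU).

    ideal_gpu_hours is the GPU-hours needed at 100% utilization (assumed reading).
    """
    if not 0.0 < mfu <= 1.0:
        raise ValueError("mfu must be in (0, 1]")
    return (hourly_rate + interconnect_overhead) * (ideal_gpu_hours / mfu)


def relative_saving(mfu_baseline: float, mfu_improved: float, price_premium: float = 0.0) -> float:
    """Fractional cost reduction of the higher-MFU cluster versus the baseline.

    price_premium is the fractional hourly price increase of the higher-MFU cluster.
    """
    return 1.0 - (1.0 + price_premium) * mfu_baseline / mfu_improved


def break_even_premium(mfu_baseline: float, mfu_improved: float) -> float:
    """Hourly price premium at which both clusters cost the same per training run."""
    return mfu_improved / mfu_baseline - 1.0


if __name__ == "__main__":
    # MFU figures from the text: 40% (legacy cloud) versus 55% (Nscale).
    print(f"saving at equal price:  {relative_saving(0.40, 0.55):.1%}")
    print(f"saving at +10% price:   {relative_saving(0.40, 0.55, 0.10):.1%}")
    print(f"break-even premium:     {break_even_premium(0.40, 0.55):.1%}")

    # Hypothetical run: 100,000 ideal GPU-hours at $2.00/h plus $0.20/h interconnect.
    legacy = total_training_cost(2.00, 0.20, 100_000, 0.40)
    specialized = total_training_cost(2.00, 0.20, 100_000, 0.55)
    print(f"legacy: ${legacy:,.0f}  specialized: ${specialized:,.0f}")
```

The saving depends only on the ratio of the two MFU values and the price premium, so the conclusion holds for any run length or base hourly rate under this model.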

Mapping the Competitive Arbitrage

Nscale is performing a classic infrastructure arbitrage. They are borrowing capital to secure specialized real estate and silicon, then retailing that capacity to entities that lack the credit rating or the technical expertise to build their own facilities.

| Variable | Legacy Hyperscaler | Nscale / Specialized AI Cloud |
|---|---|---|
| Primary Architecture | CPU-centric, Virtualized | GPU-centric, Bare Metal |
| Cooling Method | Air-cooled (standard) | Liquid-to-Chip / Immersion Ready |
| Network Fabric | Ethernet (standard) | InfiniBand / Ultra Ethernet |
| Customer Profile | General SaaS/Web | AI Labs, BioTech, Finance |

The $14.6 billion valuation assumes that the demand for "training-as-a-service" will remain inelastic even as inference begins to move toward the edge. This is a calculated bet on the Model Scaling Laws, which suggest that as long as more data and more compute lead to better intelligence, the "Compute Hunger" will continue to outpace Moore’s Law.

The Bottleneck of Grid Interconnection

While silicon availability is the current headline constraint, the long-term limitation on Nscale’s growth is power grid interconnection. The physical time required to bring 100MW of power to a site often exceeds 36 months in major hubs like Northern Virginia or Dublin. Nscale’s strategy involves identifying "stranded power" or "behind-the-meter" energy sources.

By co-locating near renewable energy sources or industrial sites with existing high-voltage infrastructure, they bypass the queue for municipal grid upgrades. This creates a Geographic Moat. Once a site is powered and populated with Blackwell chips, it becomes a localized monopoly for low-latency compute in that region.

Risks to the Valuation Thesis

The primary risk to this valuation is the potential "Inference Shift." If the industry moves from massive, centralized training runs to efficient, localized inference on smaller models (SLMs), the need for 100MW GPU "Mega-clusters" may soften.

Furthermore, the emergence of custom ASICs (TPUs, Inferentia, Trainium) poses a threat to the Nvidia-centric monoculture that Nscale currently leverages. If a significant portion of the market shifts to non-Nvidia hardware, Nscale’s specialized interconnects and software stacks may require expensive reconfiguration.

Strategic Execution Framework

For Nscale to defend this valuation, the operational focus must shift from "procurement" to "software-defined infrastructure." This involves:

- Automated Failover Orchestration: In a cluster of 10,000 GPUs, hardware failure is a daily occurrence. The ability to automatically reroute traffic and restart jobs from the latest checkpoint without human intervention is the difference between 90% and 99% uptime.

- Energy Hedging: As one of the largest power consumers in their respective regions, Nscale must act as an energy trader, using Power Purchase Agreements (PPAs) to lock in rates and mitigate the volatility of the spot market.

- Vertical Software Integration: Offering a proprietary "Kubernetes for GPUs" layer that abstracts the complexity of distributed training, making it easier for mid-market enterprises to migrate away from AWS.
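To illustrate why automated failover matters at this scale, the sketch below uses a first-order model of checkpointed training. It assumes independent GPU failures, so cluster MTBF is per-GPU MTBF divided by GPU count, and it uses Young's approximation for the checkpoint interval. Every number in it (per-GPU MTBF, checkpoint write time, recovery times) is a hypothetical placeholder, and the model is not a description of Nscale's orchestration layer.

```python
import math


def cluster_mtbf_hours(gpu_mtbf_hours: float, num_gpus: int) -> float:
    """Mean time between failures for the whole cluster, assuming independent failures."""
    return gpu_mtbf_hours / num_gpus


def young_checkpoint_interval(checkpoint_cost_hours: float, mtbf_hours: float) -> float:
    """Young's approximation for the checkpoint interval: sqrt(2 * C * MTBF)."""
    return math.sqrt(2.0 * checkpoint_cost_hours * mtbf_hours)


def useful_fraction(
    mtbf_hours: float,
    recovery_hours: float,
    checkpoint_cost_hours: float,
    interval_hours: float,
) -> float:
    """First-order estimate of the share of wall-clock time spent on useful training.

    Losses counted: time writing checkpoints, plus, per failure, the recovery
    time and on average half a checkpoint interval of lost work.
    """
    checkpoint_overhead = checkpoint_cost_hours / interval_hours
    failure_overhead = (recovery_hours + interval_hours / 2.0) / mtbf_hours
    return max(0.0, 1.0 - checkpoint_overhead - failure_overhead)


if __name__ == "__main__":
    # Hypothetical inputs: 10,000 GPUs, 50,000 h per-GPU MTBF, 2-minute checkpoints.
    mtbf = cluster_mtbf_hours(gpu_mtbf_hours=50_000, num_gpus=10_000)
    ckpt_cost = 2.0 / 60.0
    interval = young_checkpoint_interval(ckpt_cost, mtbf)
    print(f"cluster MTBF: {mtbf:.1f} h, failures per day: {24.0 / mtbf:.1f}")
    print(f"checkpoint interval: {interval * 60:.0f} min")

    # Compare a manual restart (30 min, assumed) with an automated one (3 min, assumed).
    for label, recovery in [("manual", 0.5), ("automated", 0.05)]:
        share = useful_fraction(mtbf, recovery, ckpt_cost, interval)
        print(f"{label} recovery: {share:.1%} useful time")
```

The absolute percentages depend entirely on the assumed inputs. What the model shows is that once failures arrive every few hours, recovery time becomes a leading term in lost capacity.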

The $14.6 billion figure is a vote of confidence in the physical layer of the internet. It acknowledges that AI is not just code; it is a massive, heat-generating, power-hungry industrial process. The winners of this era will not just be those who write the best algorithms, but those who own the most efficient "Compute Factories."
